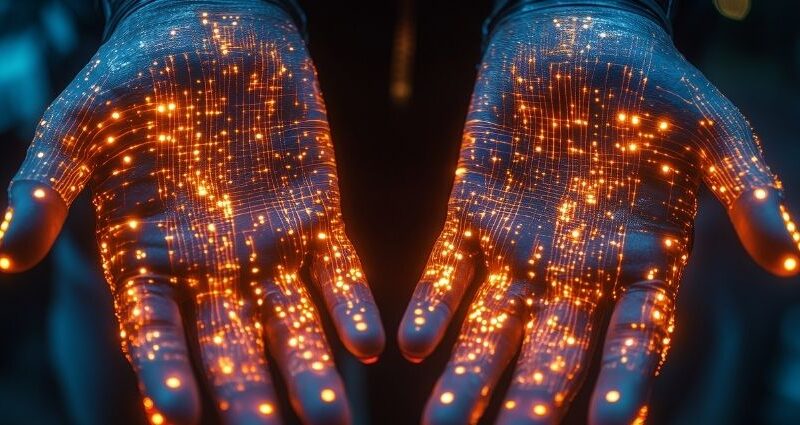

Beyond Headsets: How Wearable Haptics Are Reshaping the Future of Work

While extended reality (XR) headsets are already making a significant impact in the workplace, wearable haptics are poised to take enterprise immersion to the next level. From training and collaboration to remote operations and design, haptic wearabl

Latest Article

Putin Projects Defiance and Global Reach at Victory Day Parade Amid War Stalemate

Joined by leaders from China and Brazil — and saluted by North Korean generals — the Russian president seeks to portray strength and global releva

The Algorithmic Tightrope: Big Tech, AI, and the High-Stakes Balancing Act

As Big Tech tightens its grip on AI, society is teetering on a precarious edge — caught between groundbreaking innovation and the urgent need for ac

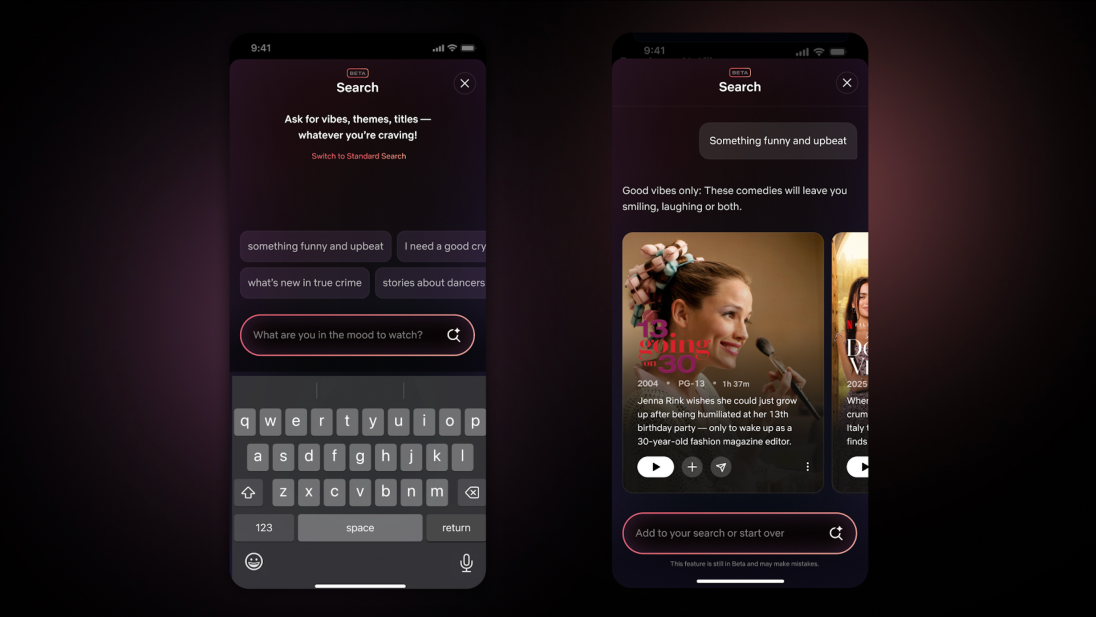

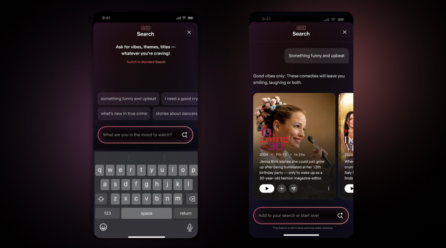

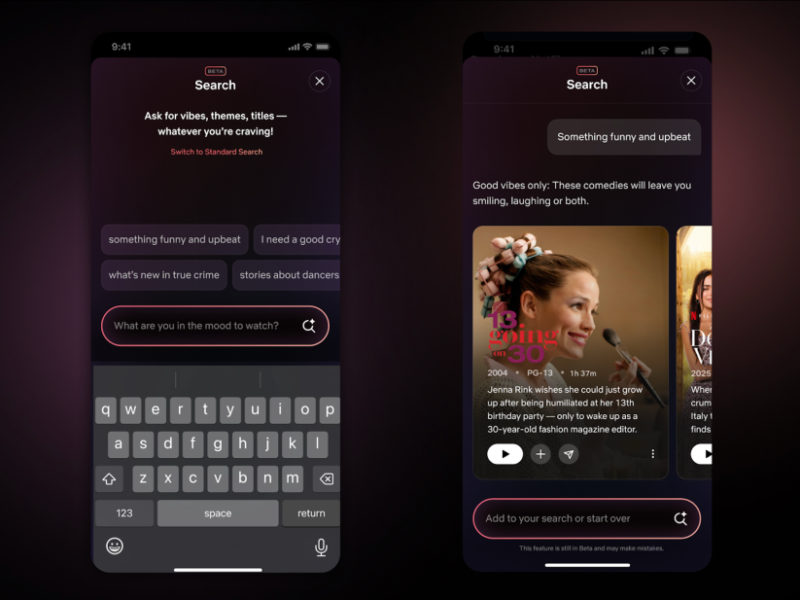

Netflix Launches ChatGPT-Powered AI Search Tool

New conversational feature lets users find content with natural language queries. Netflix has officially unveiled its new AI-powered search tool, offer

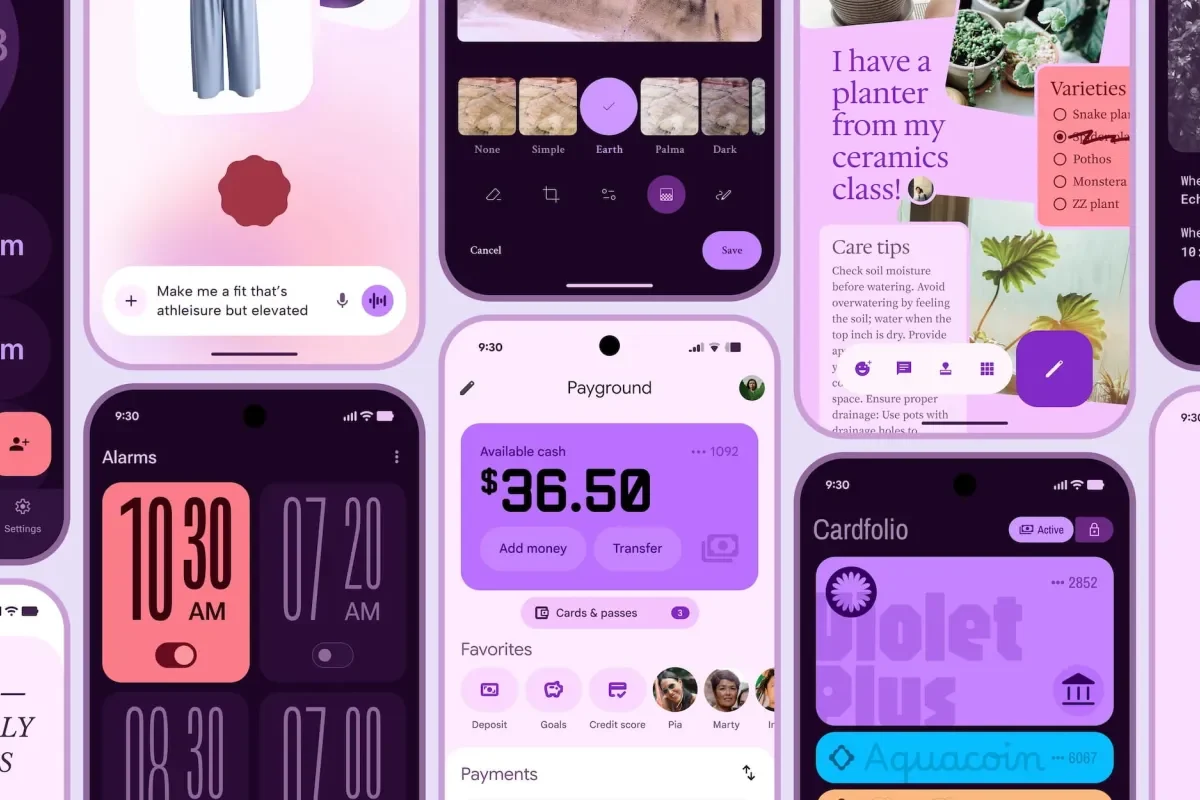

Google’s Massive Android Redesign Looks Great — But Who’s Going to See It?

Google seems poised to unveil its most significant Android redesign in nearly a decade — but a key question remains: will anyone actually notice? Th

Trump Threatens New Sanctions on Russia if It Rejects Extended Cease-Fire

The president made his ultimatum following a call with Ukraine’s Zelensky, signaling a pivot back toward Kyiv’s terms amid fragile nego

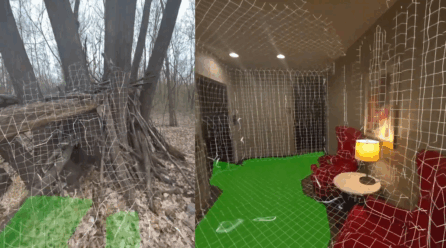

Developer Brings Continuous Scene Meshing to Quest 3 with Lasertag

A developer behind the multiplayer mixed reality game Lasertag has implemented continuous scene meshing on Meta’s Quest 3 and 3S headsets—eliminat

How Will Pope Leo Approach the Rising Right Wing of the U.S. Church?

The first American pope steps into a fractured Catholic landscape in his home country, amid rising conservative influence and global challenges. The su

Mobile Apps: A Growing Target for Hackers Due to Private Data

Mobile applications are becoming prime targets for cybercriminals, and the reason is clear: they store vast amounts of personal data. In fact, approxi

Google I/O 2025 Preview: What to Expect from Android 16, Android XR, Gemini, and More

Google I/O 2025 kicks off on May 20, and all signs point to one of the most packed developer conferences in recent memory. From Android 16 and new XR